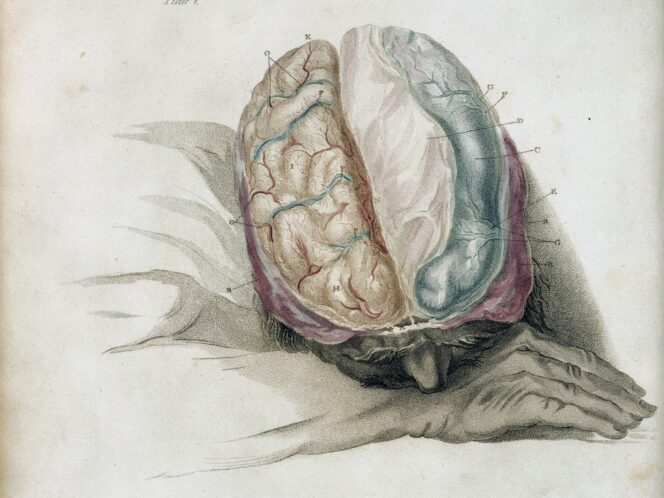

When the human mind starts to believe in something, it’s hard to get it to stop; facts and logical arguments do little to change it. If we add to this our selective memory, which is full of false memories, we have a psychological sketch of Homo sapiens, the so-called ‘wise man’.

It was supposed to be a breakthrough in medicine. The leadership of the Karolinska Institute, Sweden’s most important medical centre, would certainly be pleased that they had managed to recruit Dr Paolo Macchiarini. This Swiss-born Italian had developed an innovative method of implanting an artificial trachea. He was handsome and smooth-tongued, with a golden touch.

It’s just that he was also a fraud. As Carl Elliott wrote in the New York Review of Books, the dazzlingly tanned surgeon is the anti-hero of one of the greatest scandals in the history of contemporary medicine. His patients died one after another, often in agony, when their bodies rejected the plastic organs. One of the harshest critics of Macchiarini’s ‘method’, Professor Pierre Delaere of the Catholic University of Leuven, told Elliott: “If I had the option of a synthetic trachea or a firing squad, I’d choose the last option because it would be the least painful form of execution.”

Meanwhile, Macchiarini carried on cutting and implanting, and the Karolinska Institute—the same institution that awards the Nobel Prize in Physiology or Medicine—remained silent. Worse still, it disciplined critics of the handsome surgeon’s methods.

The scale and effectiveness of the fairy tale Macchiarini fed to those around him are best shown in the story of his former fiancée, Benita Alexander. Rings appeared, and a wedding was planned that would go down in history: Pope Francis himself would bless the union in Castel Gandolfo, Andrea Bocelli would sing, and among the guests would be Putin, the Obamas and Russell Crowe. The house of cards